FlashToken

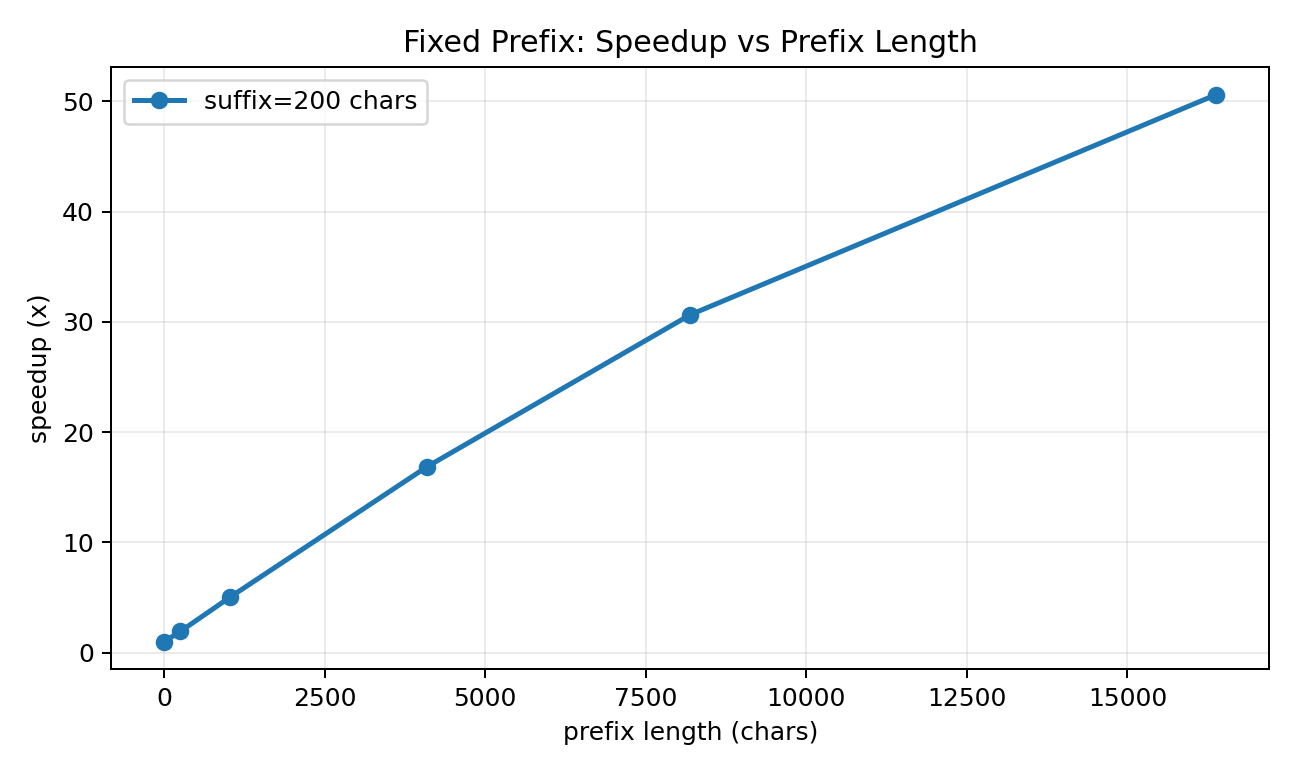

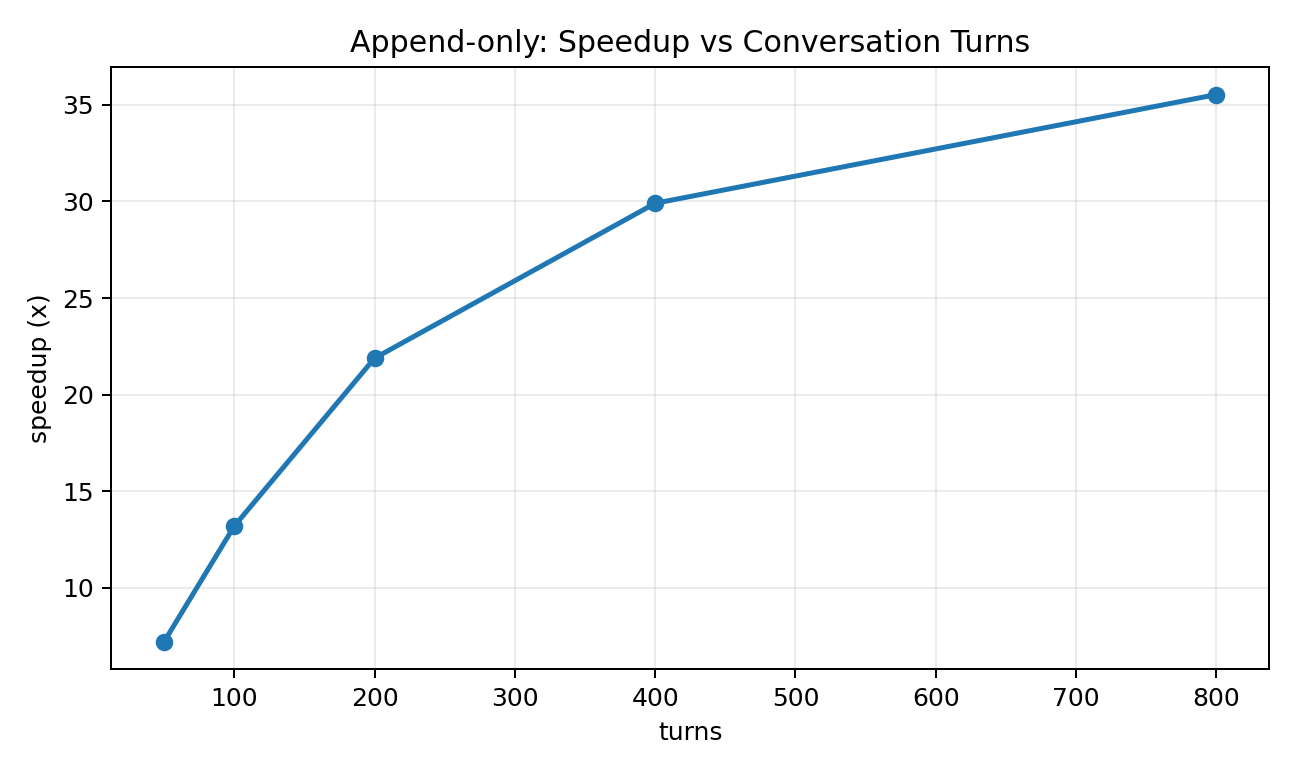

Tokenizer-side prefix caching for low-latency LLM systems, with 27×–37× speedups on reusable prompts.

FlashToken speeds up tokenization without changing model weights. When prompts share long prefixes (system prompts, templates, conversation history), FlashToken tokenizes the shared text once and reuses the result instead of re-tokenizing it on every request.
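The idea can be illustrated with a minimal, dependency-free sketch. It is not FlashToken's implementation: `toy_tokenize` and `SketchPrefixCache` are hypothetical names, and the toy regex tokenizer stands in for a real BPE encoder. The sketch also assumes the prefix ends on a token boundary (for example a newline), because reuse is only exact when no token can span the prefix/suffix boundary.

```python
import re
from typing import List


def toy_tokenize(text: str) -> List[str]:
    """Toy stand-in for a real tokenizer: word runs or single non-word characters."""
    return re.findall(r"\w+|\W", text)


class SketchPrefixCache:
    """Tokenize a fixed prefix once and reuse its tokens for every request."""

    def __init__(self, prefix: str) -> None:
        # Reuse is only exact if no token can span the prefix/suffix boundary.
        # For this toy tokenizer that holds when the prefix ends in a
        # non-word character such as "\n".
        if not prefix or re.match(r"\w", prefix[-1]):
            raise ValueError("prefix must end on a token boundary (e.g. a newline)")
        self.prefix = prefix
        self.prefix_tokens = toy_tokenize(prefix)  # cost paid once

    def encode(self, suffix: str) -> List[str]:
        # Only the suffix is tokenized on each call.
        return self.prefix_tokens + toy_tokenize(suffix)


prefix = "SYSTEM: be brief.\n"
cache = SketchPrefixCache(prefix)
suffix = "User: hello\n"

# Cached result matches tokenizing the full prompt from scratch.
assert cache.encode(suffix) == toy_tokenize(prefix + suffix)
```

With a real BPE tokenizer the boundary condition is subtler, since pre-tokenization rules and merges decide where a split is safe. This is why a token-by-token equality check against the reference encoder matters.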

Highlights

- Correctness: `mismatches = 0` (token-by-token equality with `tiktoken.encode_ordinary`).
- Speed: 27×–37× speedup on long-prefix reuse / append-only chat benchmarks.

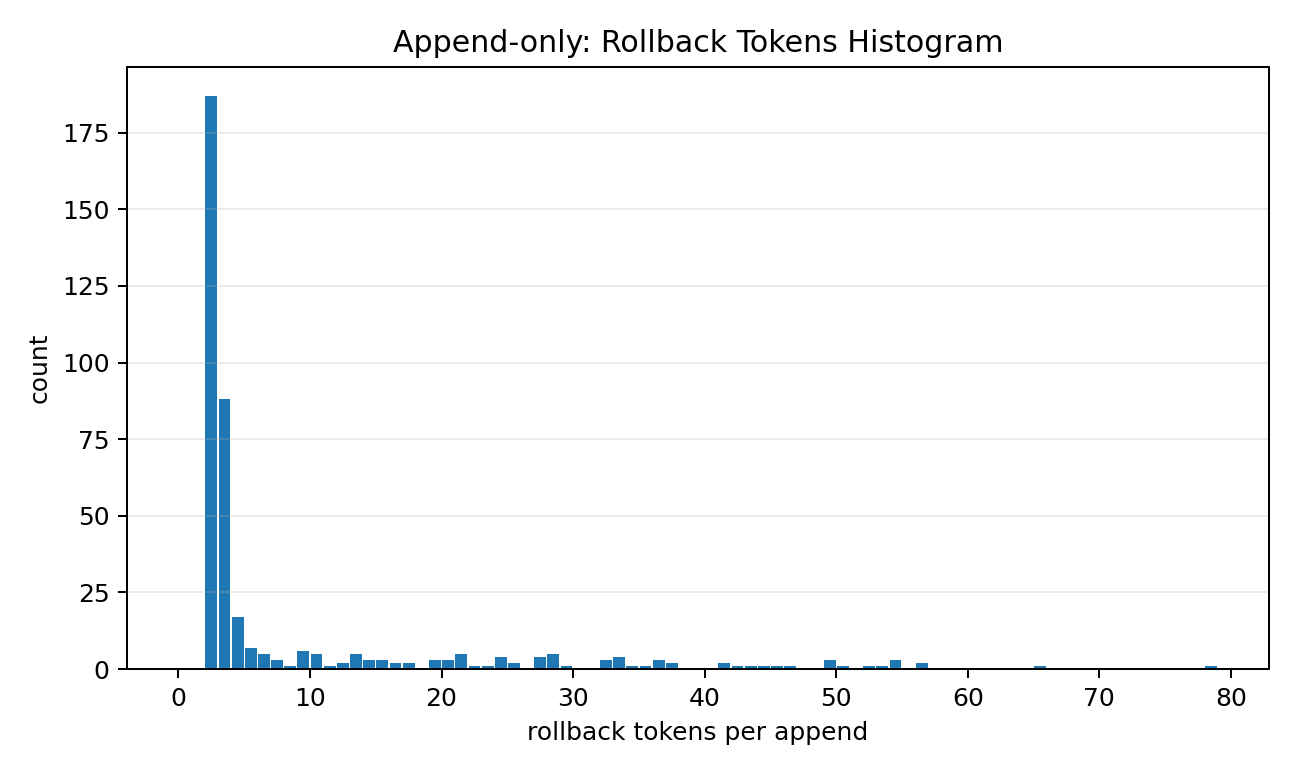

- Two strategies: fixed-prefix reuse and append-only delta tokenization (KV-cache friendly).
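As a rough illustration of the append-only strategy, the sketch below keeps the tokens of the conversation so far and tokenizes only newly appended text. It is an assumption-laden sketch rather than FlashToken's actual algorithm: `toy_tokenize` and `SketchAppendOnlyTokenizer` are hypothetical names, the regex tokenizer is a stand-in for a real encoder, and the one-token back-off is a boundary rule that happens to be sufficient for this toy tokenizer only.

```python
import re
from typing import List


def toy_tokenize(text: str) -> List[str]:
    """Toy stand-in for a real tokenizer: word runs or single non-word characters."""
    return re.findall(r"\w+|\W", text)


class SketchAppendOnlyTokenizer:
    """Maintain tokens for a growing text, tokenizing only what is appended."""

    def __init__(self) -> None:
        self.tokens: List[str] = []

    def append(self, delta: str) -> List[str]:
        # With this toy tokenizer, only the last token can change when text
        # is appended (a word run may be extended), so back off by one token
        # and re-tokenize that tail together with the new text.
        tail = self.tokens.pop() if self.tokens else ""
        self.tokens.extend(toy_tokenize(tail + delta))
        return self.tokens


turns = ["SYSTEM: be brief.\n", "User: hel", "lo\n", "Assistant: hi", "!\n"]
tok = SketchAppendOnlyTokenizer()
full_text = ""
for turn in turns:
    full_text += turn
    # Incremental tokens match a from-scratch tokenization at every step.
    assert tok.append(turn) == toy_tokenize(full_text)
```

Because every token before the re-tokenized tail is left untouched, the token sequence seen by the model only grows at the end, which is the property that lets a KV cache for the earlier positions stay valid.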

Quickstart

```python
import tiktoken

from flashtoken import FixedPrefixTokenCache

enc = tiktoken.get_encoding("cl100k_base")
cache = FixedPrefixTokenCache(enc, prefix="SYSTEM: ... long ...\n")
tokens = cache.encode_ordinary("User: hello\n")
```